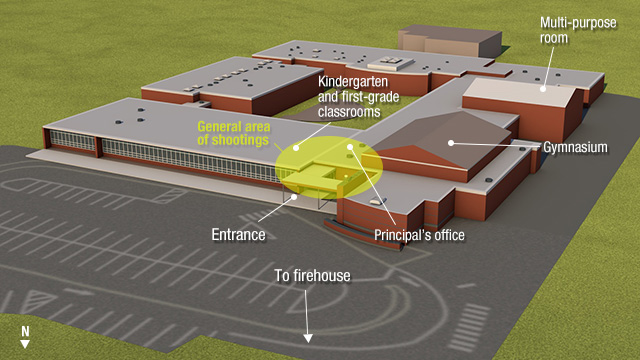

The last one was seen recently in the police shooting in Ferguson, Missouri, where Officer Darren Wilson fatally shot 18-year-old Michael Brown. In addition to the precious loss of a young man's life, the repercussions ranged from riots to citizens' loss of confidence in the police itself.

DECISION MAKING IN LIFE THREATENING SITUATIONS

Research in evolutionary psychology and cognitive science shows why we humans act preemptively (use force) when life and limb are at stake, even before all the facts are ascertained. The underlying reasons are:

- Time pressure

- Physical survival under threat, or potential loss of property

- Danger-induced emotional arousal (caused by the first two) and biased decision making that favors self-preservation.

Our base instincts pertaining to self-preservation and aggression (along with hunger, sexual drive, and bowel and bladder functions) are largely governed by the primitive, or reptilian, brain, whereas mental processes that involve higher-order thinking and symbolic manipulation, say, composing music or reading a map, operate in the rational brain.

In other words, we Homo sapiens, the supposed "Wise Man," are not really WISE when it comes to decision making with survival or self-preservation at stake.

Furthermore, when it is a matter of survival, we would rather assume on the spur of the moment that the perceived threat is real (a positive), even if it turns out to be false upon examination or later reflection.

Why? It is better to be wrong than to be sorry (after the fact, say, injury or death).

Evolutionary psychologists call it the "Snake in the Grass Effect." For example, if we are walking in the woods and feel something rubbing against our shin, our non-conscious, reptilian brain makes us jump back even before we get a chance to determine the source of that feeling. Later examination might reveal that we had just rubbed our shin against the bark of a tree, which gave us that "scaly feeling"! Thus, the "Snake in the Grass Effect."

If the "scaly feeling" that caused us to jump back in alarm turns out to be tree bark, then it was a false positive; regardless of the error, however, we have not lost a thing. Perhaps our heart rate and stress hormone levels were momentarily elevated by the hardwired fight-or-flight response. On the other hand, what if that "scaly feeling" really was a snake? It is quite possible that, on that rare occasion, it turns out to be a real rattlesnake with scales (a true positive). Jumping back in alarm may actually have helped us survive!
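The asymmetry between the two kinds of error can be made concrete with a little arithmetic. The following is a minimal sketch, not a model taken from the research mentioned above: the probability and cost figures are illustrative assumptions, and `expected_costs` is a hypothetical helper introduced only for this example.

```python
def expected_costs(p_threat: float, cost_false_alarm: float, cost_miss: float) -> dict:
    """Average cost of two simple policies for one ambiguous cue.

    p_threat:         assumed probability that the cue is a real snake
    cost_false_alarm: assumed cost of jumping back from harmless tree bark
    cost_miss:        assumed cost of ignoring a real snake
    """
    # Policy "react": we always jump, so we pay only when there was no threat.
    react = (1.0 - p_threat) * cost_false_alarm
    # Policy "ignore": we never jump, so we pay only when the threat was real.
    ignore = p_threat * cost_miss
    return {"react": react, "ignore": ignore}


# Illustrative numbers only: a 1-in-100 chance of a snake, a trivial cost for
# a needless jump, and a cost a thousand times larger for a bite.
costs = expected_costs(p_threat=0.01, cost_false_alarm=1.0, cost_miss=1000.0)
print(costs)
```

With these assumed numbers, jumping back is cheaper on average even though the cue is almost always harmless. The same arithmetic changes sharply once the reaction itself is costly, as it is when the reflex is the use of force rather than a step backward.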

Snake in the Grass Effect

SURVIVAL: DECISION MAKING ON THE POLICING BEAT

How does all this play into policing and decision making? Police officers are human, too, and are subject to the same decision-making processes described above, which are governed by the reptilian brain and prone to false positives (the Snake in the Grass Effect). Furthermore, their decision making may be affected by implicit biases when a suspect belongs to another racial or ethnic category. Alas, that is how the brain is wired given its evolutionary history.

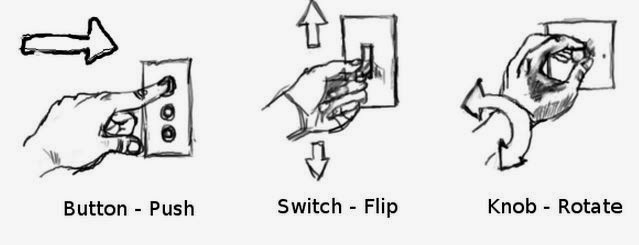

BUT, this is no excuse for police officers to open fire on innocent citizens. To prevent this, police departments have policies such as the Use of Force Continuum (picture below) that specify when the use of force is appropriate and when it can be escalated. (A recent addition is the body-worn camera, intended to deter officers from unwarranted use of force.)

The prevailing philosophy of policing, which drives much of police training and practice in the US, is informed by the scholar Egon Bittner's (1985) classic paper*. He observed:

"The police are best understood as a mechanism for distributing nonnegotiable coercive force in accordance with an intuitive grasp of situational threats to social order. This definition of the police role presents a difficult moral problem; setting the terms by which a society dedicated to peace can institutionalize the exercise of force...."

But how does a police officer, in high-stakes situations, get an intuitive grasp of situational threats? And how does one prevent false positives, particularly when transitioning from Level Four use of force to Level Five? And, in practical terms, under stressful situations, when danger-induced emotional arousal (the reptilian brain) drives much of cognition, is it even possible to recall the Use of Force Continuum?

These questions need to be asked and researched, and solutions developed, through a multi-pronged approach in the following areas:

- Selection and recruitment procedures for police officers, taking into consideration individual profiles (psychological and personality attributes) and using appropriate screening to determine whether a candidate's innate cognitive and physical abilities are suited or maladaptive for policing.

- Police training curriculum and methods (techniques and simulations to impart the knowledge, skills, and abilities needed to tamp down hardwired responses such as the "Snake in the Grass Effect").

- Policies, procedures and protocols (on use of force; buddy-system; back-ups).

- Technologies that monitor and/or augment officers' contextual-intelligence (person & place) and real-time situational awareness.

Would having unarmed police officers conduct community policing reduce the TOTAL number of unwarranted killings -- loss of lives -- of both Citizens and Police Officers?

I am not sure what the answer would be, because any loss of innocent life, be it that of an officer or a citizen, is unacceptable.

But by asking the above question, I raise a plausible solution (pointers, really) in terms of officer recruitment, training, police communications and computing technology, and policy. From a human factors standpoint, an unarmed police officer would need to have built up extraordinary abilities to defuse a situation without the use of force. In other words, our hypothetical unarmed police officer needs to have the following:

- a high level of skill in communication (persuasion/dissuasion, body and verbal language);

- expertise in naturalistic decision making (the ability to quickly discern the type of situation, then engage with or disengage from the person and incident, particularly in a one-on-one situation where there is uncertainty about the level of threat and the suspect's desire to inflict bodily harm on the officer);

- technology that augments pre-engagement decision making (sensors, warnings, pre-engagement alerts), enhancing contextual intelligence and situational awareness and enabling the right go/no-go decision;

- socio-psychological abilities (command presence, language, tone of voice, community engagement), along with physical fitness and expertise in martial arts.

The take-away message is that policing requires men and women with extraordinary capabilities and skill sets in multiple dimensions. They need not only physical strength but also wit and wisdom on the fly. In other words, they need to be real HOMO SAPIENS, a.k.a. "the Wise Man" that we are capable of being when our rational brain is operational. What can and should be done by policy makers, researchers, recruiters, trainers, and commanders, and in actual policing practice, so that we have "wise men and women" as police officers on the ground? And, more importantly, can it be realized in policing culture and practice quickly enough to prevent the next Ferguson?

About the author:

Moin Rahman is a Principal Scientist at HVHF Sciences, LLC. He specializes in:

"Designing systems and solutions for human interactions when stakes are high, moments are fleeting and actions are critical."

For more information, please visit:

http://www.linkedin.com/in/moinrahman

HVHF Article Archive: http://hvhfsciences.blogspot.com/

E-mail: moin.rahman@hvhfsciences.com